Features

Latest Features

How a custom SG gifted from The Mars Volta and a voice memo fed into Blackwater Holylight’s doomy shoegaze masterpiece

By Jason T. Mays

The Portland trio started out playing house parties. But a move to LA helped them evolve into an atmospheric powerhouse that’s the missing link between Deftones and Radiohead

How an ’80s session guitar icon accidentally ended up with the ultimate Prince amp

By Andrew Daly

Eddie Martinez was looking for a backup, and a certain Mesa/Boogie caught his eye. Little did he know, it would turn out to be a legendary gear treasure

"The bush can make you whacky, especially if you’re running from a few coppers who are shooting at you": William Crighton on bush psychedelia, collaborating with the late Rob Hirst, and his new LP Colonial Drift

By Shaun Prescott

The Australian singer-songwriter discusses the gestation and creation of his fourth album.

The holy grail of tone – three amps that built the sound of recorded guitar

By Charlie Wilkins

From shimmering cleans to full-throttle roar, these three classic amplifiers helped shape the sound of modern music and still define what great guitar tone means today

The story of Joe Perry’s ’59 Les Paul Standard – his lost treasure and ultimate birthday gift

By Andrew Daly

Joe Perry was forced to part with his beloved 'Burst in 1979, but it was his Aerosmith bandmate who found it again

How Butch Walker’s ear for killer tone took him on a journey from obscurity to in-demand producer

By Jenna Scaramanga

From Taylor Swift to Green Day, he’s worked with the world’s biggest stars, and still managed to make 16 of his own records – and, now, his own signature amp with Divided by 13

With the help of a stunning vintage Gibson acoustic, Jussi Reijonen is giving Western folk an Arabian makeover

By Amit Sharma

The Finnish fingerstylist's acoustic reveries are essential listening for fans of Paco de Lucía, Omar Khorshid, and Lenny Breau

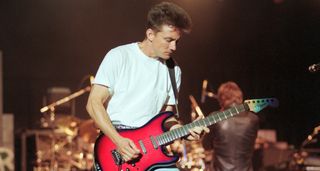

Back to the Future made them a household name. Now they needed a follow-up – the making of Huey Lewis and the News’ last big rock ’n’ roll guitar record

By Joe Matera

By 1986, the heat was on. The success of The Power of Love demanded an artistic statement, and fast. Guitarist Chris Hayes looks back on the making of Fore!, when Huey Lewis and co rose to the challenge

How to dial in any guitar amp and find your sound

By Charlie Wilkins

Great tone doesn’t need a guitar tech and a stadium rig. Here’s how you can get the most out of your amp in any situation...

How Gibson made the ultimate tribute to David Bowie guitarist Mick Ronson

By Neville Marten

This Collector’s Edition replica of Ronson’s Bowie-era ’68 Les Paul Custom transports you spiritually – and tonally – to the glory days of the Spiders from Mars (Hull) – but it does not come cheap
